Prompt Management for the Agentic Stack

One server to manage, test, evaluate, and run prompts across multiple LLM providers.

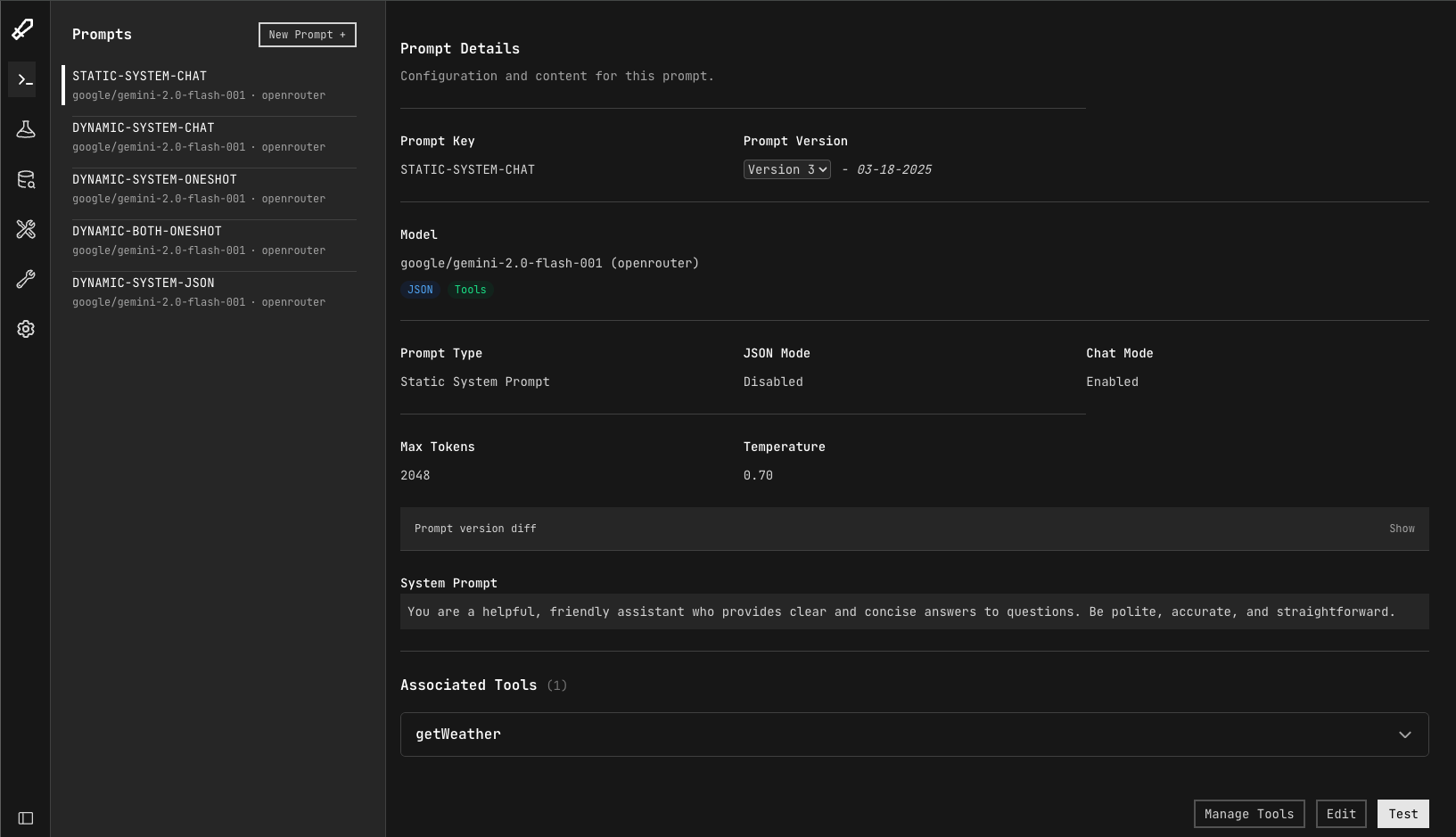

Manage prompts the right way

The key to building successful products powered by LLMs is managing your prompts effectively. We give you all the tools to do that.

- Prompt management

- Create prompts for any model. Use our Handlebars- and Jinja-inspired templating syntax. Test and configure easily.

- Evaluations

- Create evals and run them for every prompt version so you can track your prompt performance over time.

- Versioning

- Have peace of mind knowing you can roll back to a previous prompt version at any time.
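To make the templating and versioning ideas above concrete, here is a minimal sketch in plain Python. It is not llmkit's API: the `{{ name }}` placeholder syntax, the `render` function, and the `PromptHistory` class are all assumptions made up for illustration, and llmkit's real template syntax and storage model may differ.

```python
import re
from dataclasses import dataclass, field

# Hypothetical placeholder syntax: {{ name }}. llmkit's real syntax may differ.
_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(template: str, variables: dict[str, str]) -> str:
    """Replace each {{ name }} with its value; fail loudly on a missing name."""
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing template variable: {name}")
        return str(variables[name])

    return _PLACEHOLDER.sub(substitute, template)


@dataclass
class PromptHistory:
    """Append-only list of prompt versions (illustrative, not llmkit's data model)."""
    versions: list[str] = field(default_factory=list)

    def save(self, template: str) -> int:
        """Store a new version and return its 1-based version number."""
        self.versions.append(template)
        return len(self.versions)

    def current(self) -> str:
        return self.versions[-1]

    def rollback(self, version: int) -> int:
        """Re-save an earlier version as the newest one, so no history is lost."""
        if not 1 <= version <= len(self.versions):
            raise IndexError(f"no such version: {version}")
        return self.save(self.versions[version - 1])


history = PromptHistory()
history.save("Summarize this text for {{ audience }}: {{ text }}")
history.save("Summarise {{ text }}")  # a later edit that dropped the audience
history.rollback(1)                   # version 1 becomes version 3
output = render(history.current(), {"audience": "engineers", "text": "LLM evals"})
print(output)
```

Modeling a rollback as a new version, rather than deleting later ones, is one possible design choice: it keeps the full history available for later comparison.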

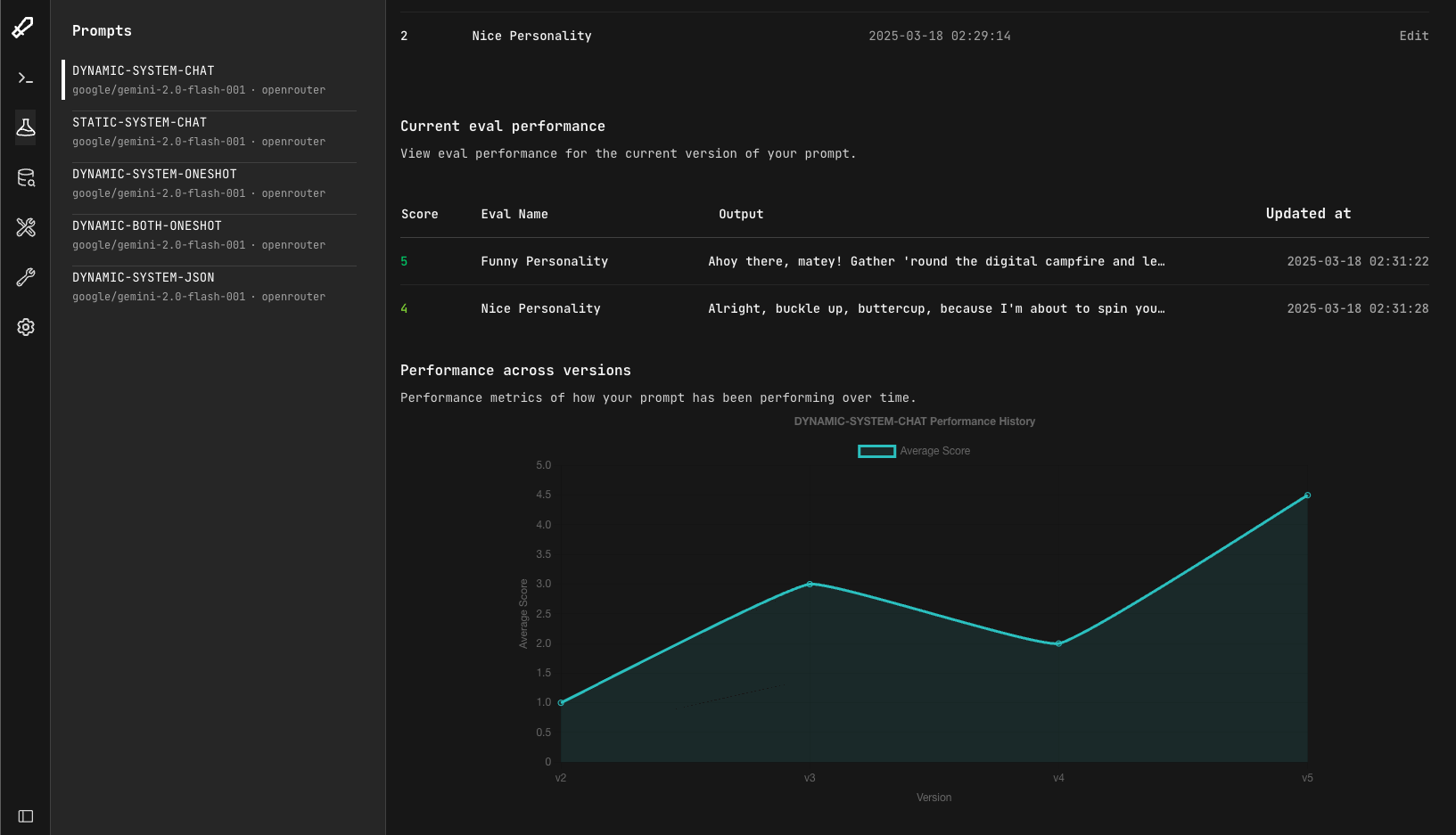

Evals. Evals. Evals.

At the core of llmkit is the ability to create evals and run them every time your prompt version changes. This lets you track the performance of your prompts over time.

- 1 Create evals for each prompt

- to test a wide variety of circumstances.

- 2 Run evals on each prompt version

- to ensure every prompt version meets your customers' needs.

- 3 Evaluate and track

- over time how your prompts perform.
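The three steps above can be sketched as a small loop: define evals, run them against each prompt version, and record a pass rate per version. Everything here is a hypothetical illustration rather than llmkit's actual interface: the `Eval` class, `run_evals`, the `{text}` placeholder style (plain `str.format`), and `fake_model`, which stands in for a real provider call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Eval:
    """One test case: template inputs plus a check on the model output."""
    name: str
    inputs: dict[str, str]
    check: Callable[[str], bool]


def fake_model(prompt: str) -> str:
    """Stand-in for a real provider call; just upper-cases the prompt."""
    return prompt.upper()


def run_evals(template: str, evals: list[Eval],
              model: Callable[[str], str] = fake_model) -> float:
    """Return the fraction of evals that pass for one prompt version."""
    if not evals:
        raise ValueError("at least one eval is required")
    passed = 0
    for ev in evals:
        prompt = template.format(**ev.inputs)  # fill in {text}-style fields
        if ev.check(model(prompt)):
            passed += 1
    return passed / len(evals)


# Step 1: create evals that cover different circumstances.
evals = [
    Eval("mentions input", {"text": "cats"}, lambda out: "CATS" in out),
    Eval("is polite", {"text": "dogs"}, lambda out: "PLEASE" in out),
]

# Step 2: run the same evals on every prompt version.
versions = {
    1: "Summarize: {text}",
    2: "Summarize politely, please: {text}",
}
scores = {number: run_evals(template, evals) for number, template in versions.items()}

# Step 3: track how the pass rate changes from version to version.
for number, score in scores.items():
    print(f"version {number}: {score:.0%} of evals passed")
```

With a real model in place of `fake_model`, the same per-version scores could be stored and charted to show whether a prompt change helped or hurt.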

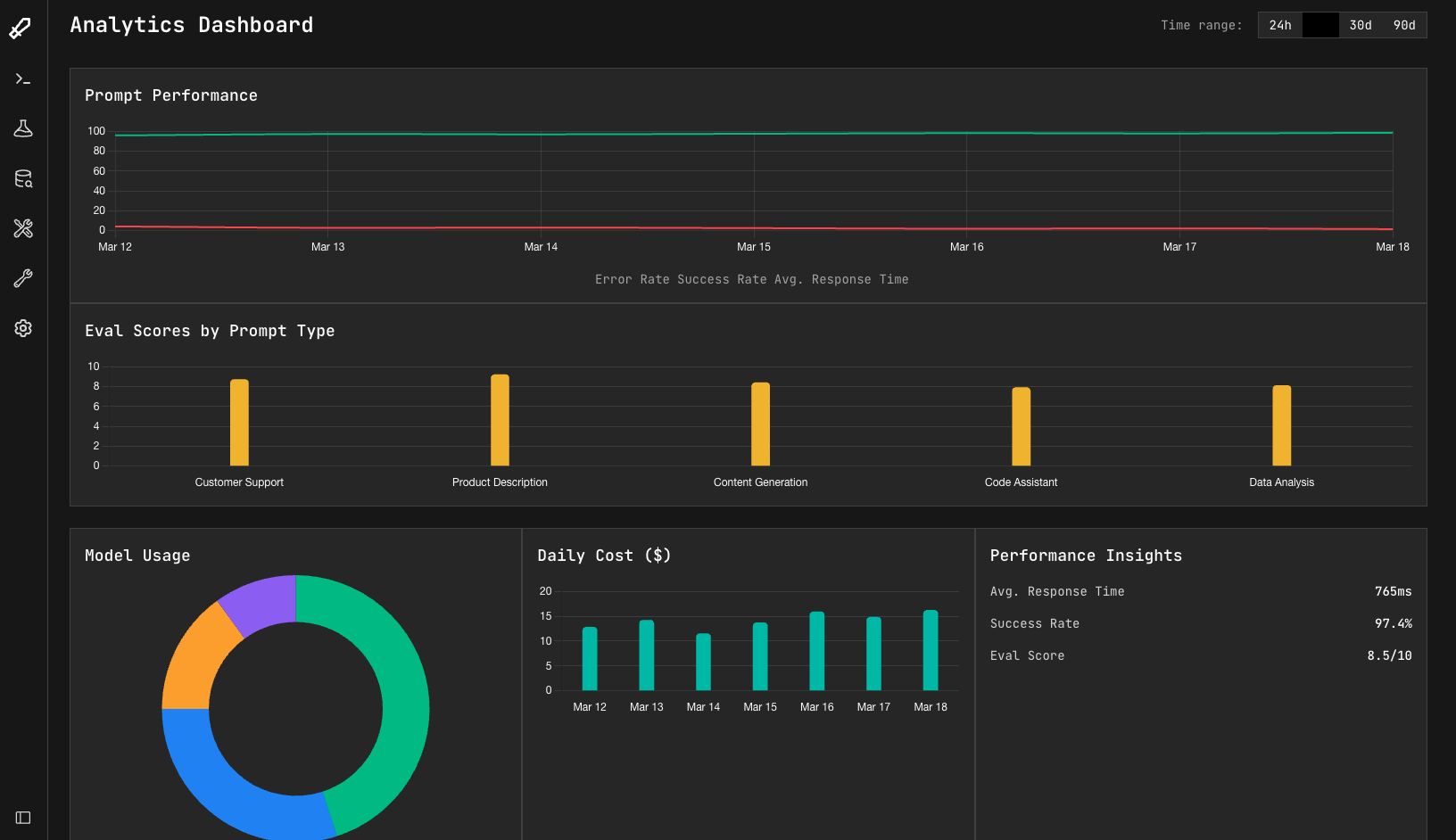

Llmkit as a Service

Our hosted version of llmkit not only removes the burden of deployment but also comes with extra features.

- Effortless deployment

- Create an account, get your keys, and get to work.

- Analytics Dashboards

- A centralized command post for monitoring prompt performance, provider latency, and cost.

- Advanced evals

- An enhanced eval suite with tool-calling support and rule-based automatic evaluations.

- MCP Servers

- Install MCP servers and give your prompts access to entire tool suites in one click.

Need more help?

Our team offers specialized consulting services to help you get the most out of your LLM implementations.

Prompt Engineering

Expert guidance on crafting effective prompts that deliver consistent, high-quality results.

LLM Integration

Custom solutions for integrating LLMs into your existing workflows and applications.

Performance Optimization

Strategies to reduce costs and improve response quality across your LLM implementations.

Get in touch

Have questions about llmkit? Whether you're curious about features, a custom deployment, or anything else, our team is ready to help.

- GitHub

- github.com/llmkit-ai